25 AI Implementation Challenges in Web Development: Guardrails That Work on Customer-Facing Websites

Deploying AI on customer-facing websites is no longer a proof-of-concept experiment — it is a production-grade engineering and governance problem. Done without discipline, it erodes user trust, invites regulatory scrutiny, and introduces liability vectors that no legal team wants to inherit. This article consolidates actionable guardrails from practitioners who have shipped AI systems at scale, mapped against the real failure modes they were designed to contain. Twenty-five strategies. Zero tolerance for vagueness.

1. Stack Checkpoints for Layered Rejection

A single content filter operating at the output layer is insufficient for production-grade AI systems. Layered rejection architecture positions independent checkpoints at the input, retrieval, generation, and output stages — each capable of terminating a request without relying on the next layer to compensate for the last. This defense-in-depth approach mirrors the security principles long established in network infrastructure and applies them to the inference pipeline. When one layer fails silently, the next layer surfaces the problem before it reaches the end user. Teams that deploy only output-layer moderation tend to discover this gap the hard way, typically in a post-incident review.

Implementation note: map each checkpoint to a specific failure mode it is designed to intercept. A checkpoint without a named threat model is overhead, not protection.

2. Defend Against Prompt Injection

Prompt injection represents one of the most operationally dangerous vectors in customer-facing AI deployment. Adversarial users — or, increasingly, malicious content embedded in third-party data sources — craft inputs designed to override system-level instructions, extract confidential context, or redirect the model's behavior entirely. Effective defense requires treating all user input as untrusted data, regardless of authentication state. This includes structural separation of system prompts from user input at the protocol level, input sanitization pipelines tuned to detect instruction-like patterns, and canary tokens embedded in system prompts that trigger alerts when reflected in model outputs.

The threat surface expands dramatically in RAG-based systems where retrieved documents contribute to the effective prompt. A document fetched from an external URL can contain adversarial instructions indistinguishable from legitimate content at the retrieval stage. Sandboxing retrieval contexts and validating retrieved chunks against a schema before injection into the prompt context reduces this exposure substantially.

3. Force Structure with Strict Templates

Unconstrained generation produces outputs of variable form, which creates compounding problems downstream: inconsistent tone, variable field completeness, unpredictable length, and schema violations that break integrations. Strict output templates resolve these failure modes by reducing the model's degrees of freedom at generation time. When the expected output structure is enforced through grammar-constrained decoding, JSON schema validation, or function-calling interfaces, the probability of structurally anomalous outputs drops to near-zero. This is not merely a formatting preference — it is a reliability primitive. Any AI component that feeds structured data into another system requires it.

4. Enforce Retrieval-Only Answers

In domains where factual accuracy is non-negotiable — legal, medical, financial, or product-specific content — the model should not be permitted to synthesize answers from parametric knowledge alone. Retrieval-only architectures mandate that every assertion in a generated response be traceable to a retrieved source chunk. This constraint eliminates the hallucination vectors that emerge when a language model fills knowledge gaps with plausible-sounding fabrications. Citation linking — surfacing the source document alongside the generated response — adds a verification layer that allows users to audit the model's reasoning chain independently, which is both a trust signal and a liability buffer.

5. Block False Compliance Claims

A model that confidently states regulatory approval, certification status, or compliance alignment it cannot verify is a liability in motion. False compliance claims represent a distinct category of harmful output that standard toxicity filters are not designed to catch. Blocking this failure mode requires domain-specific claim classifiers trained on the language patterns of regulatory assertion — phrases like "this product is FDA-approved," "compliant with GDPR," or "meets ISO standards" — combined with an allowlist of claims that have been verified against authoritative sources and pre-approved for model output. Claims outside the allowlist must route to human review before delivery.

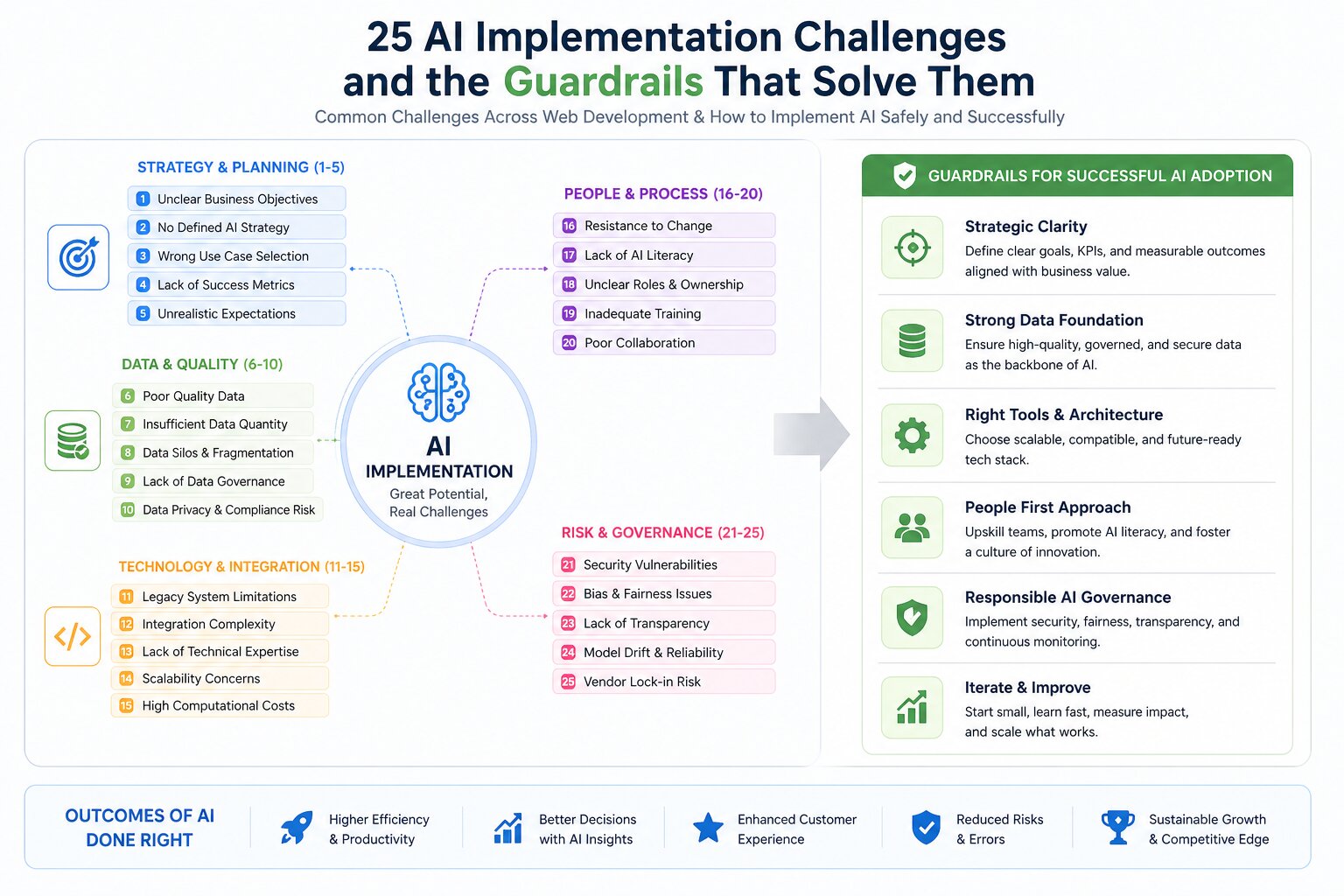

Key AI challenges and the guardrails that solve them

6. Centralize Truth in a Knowledge Graph

Distributed knowledge sources — product documentation scattered across wikis, PDFs, and legacy CMSs — create synchronization gaps that surface as contradictions in AI-generated responses. A centralized knowledge graph resolves this by establishing a single authoritative source from which all retrieval operations draw. Entity relationships are explicitly encoded, version-controlled, and auditable. When a product specification changes, the graph is updated in one place, and all downstream AI components inherit the correction without manual prompt engineering. Organizations that skip this infrastructure step discover it when users receive contradictory answers about the same product feature from the same AI system in the same session.

7. Use Live Facts First for Voice

Voice interfaces compress the trust cycle. A spoken claim sounds more authoritative than a written one, and users are less likely to cross-reference a verbal answer than a text response. For voice AI deployed on customer-facing surfaces, live data retrieval must take priority over parametric knowledge for any fact that carries a timestamp dependency: pricing, availability, hours of operation, policy status, appointment slots. Stale parametric data delivered confidently over voice is a compounding trust failure — users act on it, discover the error in the real world, and attribute the failure to the brand, not the model. Live-data-first architectures prevent this by treating parametric knowledge as a fallback of last resort, not a primary source.

8. Plan Incrementally and Limit Scope

Scope inflation is among the most reliable predictors of AI deployment failure. Teams that attempt to automate the full spectrum of customer interactions simultaneously produce systems that handle no category of query reliably. Incremental scoping — beginning with the highest-volume, lowest-risk query category and expanding only after achieving measurable accuracy benchmarks — allows teams to build operational confidence, tune retrieval pipelines, and identify edge cases before they scale into systemic failures. Phased deployment also enables meaningful A/B measurement of AI performance against baseline, which is impossible when the system has no defined boundaries.

When AI coding agents are tasked with adding new functionality or resolving a bug, they carry an inherent risk of introducing regressions into pre-existing features. The agent naturally scopes its attention toward the specific ask presented by the engineer, without accounting for the broader ramifications across the existing codebase.

Engage Plan Mode before committing to Agent Mode — allow the AI to architect the implementation strategy and articulate its design decisions, then iterate and refine that plan before a single line of code is written. Deliver features in discrete phases and share only the context that is directly relevant to each phase; scope creep is a problem that affects AI workflows just as readily as human ones.

Additionally, invest time in independently researching the latest frameworks and patterns — particularly for backend systems — and explicitly instruct the AI to apply current best practices. An older knowledge checkpoint embedded in the underlying model may generate code that is suboptimal or anchored to a deprecated version of the framework in question.

-Advitya Gemawat, Machine Learning Engineer, Microsoft Corporation

9. Wall Off All Regulatory Content

Regulatory content — financial disclosures, medical contraindications, legal terms, insurance policy language — carries a different risk profile than general content. The margin for imprecision is zero, and the consequences of paraphrased, compressed, or synthesized regulatory language include regulatory sanctions, class action exposure, and reputational damage. The appropriate guardrail is categorical isolation: AI systems should not generate, paraphrase, or synthesize regulatory content under any circumstances. These passages must be served verbatim from approved text stores, with AI involvement limited to retrieval and presentation context. Any AI component that touches regulatory content in generative mode requires a separate legal sign-off process, not just an engineering review.

10. Apply Access Controls and Field Redaction

AI systems connected to user data introduce access control surfaces that conventional application security frameworks are not designed to evaluate. A model with read access to a user record may, through multi-turn context accumulation or indirect prompt injection, expose fields it was never intended to surface. Field-level redaction — stripping sensitive attributes from the context before they reach the model's attention window — is the correct primitive here. Role-based access controls must be enforced at the data retrieval layer, not the application layer, and must be validated under adversarial conditions before deployment. The assumption that a model will "know not to repeat" sensitive data it has encountered in context is not a control — it is a wishful default that fails under minimal adversarial pressure.

11. Pair Draft Automation with Expert Review

Fully automated content pipelines — AI generates, systems publish — are appropriate for low-stakes, high-volume content categories like product descriptions with narrow factual surface area. For any content that touches professional expertise, draft automation should be understood as a velocity tool, not a quality assurance system. The expert reviewer in this model is not a copy editor correcting grammar; they are a subject matter validator confirming that the AI's synthesis is factually sound, contextually appropriate, and free of the subtle distortions that emerge when a model generalizes from imperfect training data. Workflow tooling that presents the AI draft alongside the source material it was drawn from accelerates this review cycle significantly.

12. Filter Sensitive Details in Real Time

Real-time output filtering — operating at the token stream level rather than post-generation — provides the lowest-latency intervention for sensitive detail suppression. Pattern-matched filters for PII categories (SSNs, card numbers, phone numbers, email addresses), credential-adjacent language, and domain-specific sensitive terms can be applied to the streaming output before it reaches the client render layer. This approach is preferable to post-processing because it prevents the sensitive content from ever being transmitted, not merely displayed. In multi-modal systems where AI-generated text feeds downstream processes, this distinction matters — a filtered display field does not guarantee the underlying data was not logged, cached, or indexed.

13. Publish an Explicit Model Usage Policy

Transparency about AI use is no longer optional goodwill — it is a baseline user expectation and, in an increasing number of jurisdictions, a regulatory requirement. A model usage policy published at the point of interaction defines what the AI system does, what data it accesses, how responses are generated, and what review processes apply. The policy is not a legal disclaimer buried in terms of service — it is a functional document written for the user who wants to understand what they are interacting with. Organizations that preemptively publish this documentation demonstrate operational maturity and reduce the surface area for trust-damaging revelations that emerge when undisclosed AI use is discovered by journalists or regulators.

14. Gate Leads with Geographic Verification

AI-driven lead qualification and product recommendation flows that operate across geographies must account for regulatory asymmetry. A product recommendation that is entirely appropriate in one jurisdiction may be legally restricted, require additional disclosures, or be prohibited in another. Geographic verification at the lead intake stage — not as a UI courtesy feature, but as a hard gate that routes users to jurisdiction-appropriate flows — prevents the scenario where an AI system confidently provides product guidance that is non-compliant for the user's actual location. IP-based geolocation, supplemented by declared location inputs, provides sufficient signal for this routing logic in most implementations.

How Teckgeekz Built 50+ City Pages That Actually Rank

15. Fix Search with a Clean Index

AI-powered search is only as accurate as the index it queries. Duplicate entries, orphaned documents, inconsistent metadata, and stale content in the index translate directly into retrieval errors that no amount of generative sophistication can correct. Index hygiene — deduplication, version control, metadata standardization, and scheduled staleness audits — is the unglamorous infrastructure work that determines retrieval quality at the baseline. Organizations that invest in model capability while neglecting index integrity routinely observe a performance ceiling they cannot explain, because the problem is not in the model. The signal-to-noise ratio of the index is the binding constraint, and it must be addressed before retrieval architecture optimization yields meaningful returns.

16. Add Keyword Barriers and Human Audits

Keyword barriers — blocklists and allowlists applied at the query and response layer — provide deterministic guardrails in domains where probabilistic model behavior is insufficient. For high-stakes content categories, deterministic rules outperform learned classifiers because they are auditable, predictable, and legally defensible. Human audit cycles — structured reviews of AI outputs against defined quality criteria, conducted on a scheduled basis rather than reactively — surface the slow drift that occurs when model behavior degrades gradually over time or as the document corpus evolves. An audit cycle that produces no findings is still valuable: it is documented evidence of a functioning oversight process, which matters in regulatory contexts.

17. Restructure Pages for AI Clarity

AI retrieval systems interpret page structure as a signal for content hierarchy and relevance. Poorly structured pages — dense walls of text, missing semantic markup, ambiguous headings, embedded tables without context — produce retrieval outputs of degraded quality even when the underlying content is accurate. Restructuring content for AI consumption is not synonymous with restructuring it for SEO; the optimization targets are distinct. Clear semantic headings, explicit statement-level factual claims, structured data markup, and consistent entity naming across a document corpus materially improve the accuracy and coherence of AI-generated responses that draw on that content. This is a content operations intervention with measurable retrieval quality impact.

18. Explain Scores and Hide Low Confidence

AI scoring systems — relevance scores, risk scores, recommendation confidence scores — displayed to end users without explanatory context produce two predictable failure modes: over-trust in high scores and under-trust in accurate-but-opaque outputs. Score explainability, implemented as a lightweight natural language summary of the factors contributing to a score, reduces both failure modes simultaneously. The second guardrail — suppressing or clearly flagging outputs below a defined confidence threshold rather than presenting them at face value — prevents low-quality inferences from reaching users dressed as authoritative outputs. A confident-looking response with a 0.45 retrieval similarity score is not a response: it is a liability.

19. Red-Team Before Every Release

Automated evaluation suites measure known failure modes against known test cases. Red-teaming surfaces the failure modes that were not anticipated when the test suite was designed. Pre-release red-teaming — structured adversarial testing conducted by individuals with no stake in demonstrating the system works — is the single highest-leverage quality intervention available to AI deployment teams. Effective red-teaming requires diverse attack vectors: jailbreak attempts, edge-case domain queries, multi-turn manipulation sequences, and real user queries from production logs of prior systems. Findings should gate release decisions, not merely inform them. A red-team finding that is documented, noted, and shipped around is a release commitment that the organization has accepted a known risk.

20. Deliver Instant Photo-Based Junk Estimates

In sectors like waste management, logistics, and residential services, AI-powered visual estimation tools — where users submit a photo and receive an instant price range — collapse the friction-heavy quote request process into a near-zero-effort interaction. The guardrail here is expectation framing: estimates derived from image analysis must be explicitly scoped as indicative ranges, not binding quotes. Confidence intervals should be surfaced in the UI, and the estimation model should be tuned to slight over-estimation rather than under-estimation to avoid the trust damage of a final price that significantly exceeds the AI-generated estimate. Visual estimation is a conversion tool with a defined error tolerance; treating it as a precision instrument breaks both the user experience and the economics.

21. Provide Visual References to Shape Design

AI design assistants — tools that generate layout suggestions, color palette recommendations, or spatial planning outputs — perform significantly better when they operate against a constrained visual reference set rather than unbounded parametric space. Providing curated visual references — style guides, approved brand assets, domain-specific exemplar sets — shapes AI design output toward the desired aesthetic envelope without requiring users to develop vocabulary-precise prompts. This guardrail also reduces the variance of outputs, which matters when the AI design tool is deployed to users with minimal design background who cannot reliably evaluate whether an AI-generated option is appropriate for the brand context.

22. Tighten Boundaries and Enable User Flags

Behavioral boundaries on customer-facing AI systems should be actively maintained, not set once at launch. User flagging mechanisms — allowing end users to signal problematic outputs through a low-friction interface — provide a continuous feedback stream that surfaces boundary failures faster than any automated monitoring system. The data generated by user flags is operationally valuable: it identifies the specific input patterns and response categories where the system's behavior diverges from user expectations, which is directly actionable information for prompt engineers and fine-tuning teams. Flags should route to a human review queue with defined SLA targets, not into an analytics dashboard that is reviewed quarterly.

23. Constrain Output to Approved Paths

Agentic AI components — systems that take actions, not just generate text — require the most aggressive output constraints. A language model generating a product description that contains an error is recoverable. A language model executing a workflow step that submits an incorrect order, triggers an erroneous refund, or modifies a production record is not recoverable without operational cost and potentially user harm. Approved-path constraints define the complete set of actions an agentic component is permitted to execute, expressed as an explicit allowlist, with any action outside the allowlist requiring human authorization. The constraint surface should be as narrow as the use case requires, not as broad as the technology permits.

24. Label AI Replies and Verify Production State

Every AI-generated response on a customer-facing surface should carry an explicit AI attribution label. This is not a hedge — it is a trust primitive. Users who know they are interacting with an AI calibrate their follow-up behavior differently than users who believe they are in a human conversation, and that calibration difference produces better interaction outcomes, not worse. Separately, production state verification — confirming that the AI component operating in production is the exact version that passed QA, using the exact prompt configurations and knowledge sources that were reviewed — is a discipline that most teams underinvest in until they experience a silent regression caused by an unintended configuration change. Deployment manifests, hash-verified prompt files, and environment-specific smoke tests address this gap directly.

25. Require a Clear Reader Outcome

Every piece of AI-generated content deployed on a customer-facing surface should be authored — or evaluated — against a defined reader outcome. "What should the reader understand, decide, or do after consuming this content?" is the question that separates purposeful AI content from volume-filling output. AI systems optimized purely for content production metrics — words per hour, pages per week — without outcome alignment produce content that occupies space without generating value. Over time, this degrades the perceived quality of the entire content surface, dilutes SEO equity, and erodes user trust in the brand's knowledge resources. Outcome-driven content briefs, applied as constraints at the generation stage, are the corrective mechanism. If the intended outcome cannot be stated before generation begins, generation should not begin.

The Discipline Is the Differentiator

The organizations winning with AI on customer-facing websites are not the ones with access to the most capable models. They are the ones that have built the governance infrastructure to deploy those models responsibly — and the operational discipline to maintain that infrastructure as the technology, the regulatory environment, and their own business requirements evolve.

These 25 guardrails are not a checklist to be completed and archived. They are an operational framework to be implemented iteratively, reviewed continuously, and updated as new failure modes emerge. The model that passes red-teaming in Q1 may not pass in Q3. The compliance landscape that was clear at launch may be ambiguous after a regulatory update. The knowledge graph that was accurate at indexing time drifts the moment the business changes something.

AI implementation in web development is not a deployment problem — it is a continuous operations problem. The teams that understand this distinction are the ones building systems that still work, still comply, and still earn user trust twelve months after launch.

Jeffrey Mathew

Founder & CEO • Travel Marketing Specialist

"With over 14 years of dominance in the travel and tech sectors, Jeffrey Mathew has engineered growth for hundreds of OTAs and airlines worldwide. He specializes in the intersection of Performance PPC and Agentic AI, building high-performance digital ecosystems for modern brands."

Start a Conversation

Ready to Elevate Your Business?

Fill out the form below and let's discuss how Teckgeekz can help you reach your goals.

Describe your project to unlock real-time AI service recommendations and matching portfolio cases.

Our Trusted Partners